Nice review of Albion’s Seed

In my first post on creating an ebook I discussed the physical manipulation required to convert a paperback book into images and ultimately text files. Now I want to convert the text files into an ebook. Here is the sequence of events:

I start with my dataframe listing all files and their page numbers and read each individual page text file into an R list.

    library(tidytext)

By counting the rows associated with each chapter in the dataframe, I determine the number of pages per chapter and then combine those pages into a list by chapter, 17 chapters in total for Picnic. I will not annotate individual pages with page numbers; instead I combine all pages into a chapter and let the epub format handle the flow.

    pages <- vector(mode="list", length=nrow(d))
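The grouping step can be sketched with `split()`. This is a minimal illustration, not the code used for the book: the three-row table and the placeholder page text are made up, and only the column name `chpt` is taken from the data.frame described in this series.

```r
# Hypothetical miniature of the page table: one row per page file, in reading order.
d <- data.frame(
  file = c("chpt01o-0001.txt", "chpt01e-0001.txt", "chpt02o-0001.txt"),
  chpt = c(1, 1, 2),
  stringsAsFactors = FALSE
)

# Placeholder page contents: one character vector of lines per page.
pages <- list(
  c("page 1 line 1", "page 1 line 2"),
  c("page 2 line 1"),
  c("page 3 line 1")
)

# Pages per chapter, counted from the rows of the table.
pages_per_chapter <- table(d$chpt)

# Group the page list by chapter, then flatten each group into one vector of lines.
chapters <- lapply(split(pages, d$chpt), function(p) unlist(p, use.names = FALSE))
```

Because `split()` keeps the original order within each group, the table has to be sorted into reading order before this step.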

OCR will have introduced many misspellings, some of which can be corrected in bulk. I also want to remove ligatures, as these interfere with word recognition during spell checking. Finally, the typesetting process hyphenates many words at the end of a line to preserve readability. I want to remove these hyphens and let the epub flow the text instead.

I create the method replaceforeignchars which will replace ligatures and common misspellings. The replacements to be executed are tabulated in the table “fromto”:

    ##define method
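A table-driven replacement of this kind might look like the sketch below. The function name `replace_from_table` and the three table entries are assumptions for illustration; the actual contents of "fromto" are not reproduced here.

```r
# Hypothetical replacement table: two ligatures and one typical OCR misspelling.
fromto <- data.frame(
  from = c("\ufb01", "\ufb02", "tbe"),
  to   = c("fi", "fl", "the"),
  stringsAsFactors = FALSE
)

# Apply every from -> to pair in turn to a character vector.
replace_from_table <- function(x, fromto) {
  for (i in seq_len(nrow(fromto))) {
    # fixed = TRUE treats "from" as a literal string, not a regular expression
    x <- gsub(fromto$from[i], fromto$to[i], x, fixed = TRUE)
  }
  x
}

cleaned <- replace_from_table("The \ufb01rst \ufb02ower on tbe rock", fromto)
```

Literal matching also replaces a misspelling when it occurs inside a longer word, so entries in the table should be chosen with that in mind.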

With pages combined into chapters, I remove hyphenated words at the end of lines.


In practice this didn’t work so well. Tokenizing a sentence removes capitalization, which then has to be manually corrected. There were also occasions where a line was duplicated, and this had to be manually corrected as well. I decided to remove hyphens manually while editing the text.
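For comparison, a regular expression applied to the raw lines avoids tokenizing and so leaves capitalization alone. This is a sketch of an alternative, not the approach tried above; `dehyphenate` is a hypothetical helper, and it will wrongly join genuine compounds (such as "well-known") that happen to break at a line end.

```r
# Join words split across a line break, e.g. "mys-" / "tery" -> "mystery".
dehyphenate <- function(lines) {
  text <- paste(lines, collapse = "\n")
  # Only join when a letter precedes the hyphen and a lowercase letter follows the break.
  text <- gsub("([[:alpha:]])-\n([[:lower:]])", "\\1\\2", text)
  strsplit(text, "\n", fixed = TRUE)[[1]]
}

joined <- dehyphenate(c("the mys-", "tery deepens"))
```

Joining removes the line break as well, so the two input lines come back as one.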

Next I print out each chapter as a page with xhtml annotation:

    for(i in 1:16){
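Wrapping a chapter in XHTML can be sketched as follows. The helper name `chapter_to_xhtml`, the heading level, and the output file name are assumptions made for this example rather than the markup actually used for the book.

```r
# Wrap a character vector of paragraphs in a minimal XHTML page.
chapter_to_xhtml <- function(paragraphs, title) {
  # Escape the three characters that are special in XML text.
  esc <- function(x) {
    x <- gsub("&", "&amp;", x, fixed = TRUE)
    x <- gsub("<", "&lt;", x, fixed = TRUE)
    gsub(">", "&gt;", x, fixed = TRUE)
  }
  c('<?xml version="1.0" encoding="UTF-8"?>',
    '<html xmlns="http://www.w3.org/1999/xhtml">',
    paste0("<head><title>", esc(title), "</title></head>"),
    "<body>",
    paste0("<h2>", esc(title), "</h2>"),
    paste0("<p>", esc(paragraphs), "</p>"),
    "</body>",
    "</html>")
}

xhtml <- chapter_to_xhtml(c("First paragraph.", "Fish & chips."), "Chapter 1")
out_path <- file.path(tempdir(), "chapter01.xhtml")
writeLines(xhtml, out_path)
```

Escaping `&` first matters; otherwise the ampersands introduced by `&lt;` and `&gt;` would be escaped a second time.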

A useful command is saveRDS which allows for the saving of R objects. Here I save my list, which I can read back into an object, modify, and resave.

    saveRDS(chapters2, paste(getwd(), "/chptobj/chptobj.list", sep=""))
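The save, modify, and resave cycle pairs `saveRDS` with `readRDS`. A small round trip, using a temporary path and placeholder content rather than the real chapter list:

```r
# Placeholder chapter list standing in for the real one.
chapters2 <- list(c("line a", "line b"), c("line c"))

# Save, read back, correct one line, and save again.
path <- file.path(tempdir(), "chptobj.list")
saveRDS(chapters2, path)
restored <- readRDS(path)
restored[[1]][2] <- "line b, corrected"
saveRDS(restored, path)
```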

The package qdap provides an interactive method, check_spelling_interactive, for spell checking. A dialog box will pop up for each unrecognized word in turn, providing you with a pick list of potential corrections or the opportunity to type in a correction manually.

    library(qdap)

I found that the pick list often did not provide the appropriate choice and that capitalization is not preserved. Picnic also has many slang words, which forced interaction with qdap too frequently. I decided to read through the text and correct it manually.

Here is qdap flagging the French ‘alors’. There are settings for qdap that may improve the word choices available, but I did not spend the time investigating.

Once the chapters have been edited and proofread, it is time to create the epub. An epub is a zip file with the extension “.epub”. It also has a well-defined directory layout and required files that define chapters, images, flow control, etc. ePub specifications and tutorials are readily available online. Here I will show examples of some of the epub contents.

File toc.ncx

    <ncx xmlns="http://www.daisy.org/z3986/2005/ncx/" version="2005-1">

File metadata.opf

    <package xmlns="http://www.idpf.org/2007/opf" version="2.0" unique-identifier="bookid">

File container.xml:

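The two fixed pieces of the layout, the `mimetype` file and `META-INF/container.xml`, can be written from R. The sketch below uses the standard container markup; the `full-path` value assumes that metadata.opf sits at the top level of the archive, which may not match the layout used here, and the target directory is a temporary one chosen for the example.

```r
# Build the skeleton in a temporary directory (hypothetical location).
epub_root <- file.path(tempdir(), "epub_sketch")
dir.create(file.path(epub_root, "META-INF"), recursive = TRUE, showWarnings = FALSE)

# mimetype holds exactly this string, with no trailing newline.
cat("application/epub+zip", file = file.path(epub_root, "mimetype"))

# container.xml tells the reading system where the OPF package file lives.
container <- c(
  '<?xml version="1.0" encoding="UTF-8"?>',
  '<container version="1.0" xmlns="urn:oasis:names:tc:opendocument:xmlns:container">',
  '  <rootfiles>',
  # Assumption: the OPF file is at the archive root; adjust full-path otherwise.
  '    <rootfile full-path="metadata.opf" media-type="application/oebps-package+xml"/>',
  '  </rootfiles>',
  '</container>'
)
writeLines(container, file.path(epub_root, "META-INF", "container.xml"))
```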

File - an example chapter:


Once the files are in order they are zipped into an epub. Navigate to the directory containing your files and:

    zip -Xr9D Picnic_at_Hanging_Rock.epub mimetype * -x .DS_Store

Some of the switches I am using:

-X: Exclude extra file attributes (permissions, ownership, anything that adds extra bytes)

-r: Recurse into directories

-9: Slowest but most optimized compression

-D: Do not create directory entries in the zip archive

-x .DS_Store: Don’t include Mac OS X’s hidden file of snapshots etc.

The next post in this series discusses sentiment analysis.

One of my all-time favorite movies is Picnic at Hanging Rock by Peter Weir. Every scene is a painting, and the atmosphere transports you back to the Australian bush of 1900. The movie is based on a book by Joan Lindsay, who had the genius to leave the plot’s main mystery unresolved. During her lifetime she never discouraged anyone from claiming the book was based on real events. After her death in 1984 a “lost” final chapter was discovered, which purportedly resolved the mystery. Most readers (myself included) believe the final chapter is a hoax.

Recently on R-bloggers there has been a run on articles discussing sentiment analysis. I thought it would be fun to text mine and sentiment analyze Picnic. I purchased a paperback version of the book years ago, which I read while on vacation.

My book is old and the pages are yellowing. Time to preserve it for posterity.

In this post I will discuss converting a paperback into an ebook. Future posts will discuss the text mining/sentiment analysis. The steps are:

As an aside, one of the most impressive pieces of crowdsourcing software I have seen is Project Gutenberg’s Distributed Proofreaders website. Dump in your scanned images and the site will coordinate proofreading and text assembly. Procedures are in place for managing the workflow, resolving discrepancies, motivating volunteers, etc. Picnic doesn’t qualify for this treatment as it is not in the public domain. I will have to do it myself.

I used a single edge razor blade. Cut as smoothly and straight as possible. Keep the pages in numerical order.

I have an HP OfficeJet 5610 All-in-One multifunction printer equipped with a document feeder. I am working with Debian Linux, so I use Xsane as the scanning software. Searching the web I find that there is a lot of discussion concerning the optimum resolution, color, and file format that should be used for images destined for OCR. I decided on 300dpi grayscale TIFF, which in retrospect was a good choice. I load one chapter at a time onto the document feeder positioned such that the smooth edge enters the feeder first. This results in odd pages being rotated 90 degrees counterclockwise, and even pages being rotated 90 degrees clockwise. Xsane will auto-number the images, but I will supply a prefix following a convention: “chptNN[e|o]-NNNN” where e|o is e or o standing for even or odd page numbers, NN for the chapter number and NNNN is the Xsane supplied image number. The image number will start at 1 for each set (even or odd) of chapter pages.

Once all images are scanned, I will need to rotate either 90 or 270 degrees to prepare for OCR, using the rotate.image function from the adimpro package. I use the following code, depositing the rotated images in a separate directory:

    library("adimpro")
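Choosing the angle per image comes down to reading the e or o out of the file name. The helper below is hypothetical and does not call adimpro itself. Which of 90 or 270 belongs to odd pages depends on the direction in which the rotation function turns the image, so the defaults here are an assumption to check against one scanned page.

```r
# Pick a rotation angle from the e/o marker in a name like "chpt01o-0001.tiff".
rotation_for <- function(filename, odd_angle = 90, even_angle = 270) {
  eo <- sub("^chpt[0-9]{2}([eo])-.*$", "\\1", basename(filename))
  # Assumption: odd pages take odd_angle, even pages take even_angle.
  ifelse(eo == "o", odd_angle, even_angle)
}

angles <- rotation_for(c("chpt01o-0001.tiff", "chpt01e-0001.tiff"))
```

The resulting angles can then be passed, image by image, to whatever rotation routine is in use.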

Next I perform OCR on each image. I use tesseract from Google, which is available as a Debian package.

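A loop over the rotated images might look like the sketch below. It relies on the basic command-line form `tesseract <image> <output base>`, where tesseract appends ".txt" to the output base itself. The helper names and the "rotated" directory are assumptions, and `run_ocr` is defined but not called here because it needs tesseract installed.

```r
# Build the two arguments for one image: input file and output base (no extension).
ocr_args <- function(image_file, out_dir) {
  base <- tools::file_path_sans_ext(basename(image_file))
  c(image_file, file.path(out_dir, base))
}

# Run tesseract once per image; requires the tesseract binary on the PATH.
run_ocr <- function(image_files, out_dir) {
  for (f in image_files) {
    system2("tesseract", shQuote(ocr_args(f, out_dir)))
  }
}

example_args <- ocr_args("rotated/chpt01o-0001.tiff", "textfiles")
```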

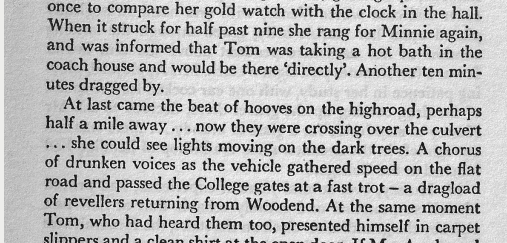

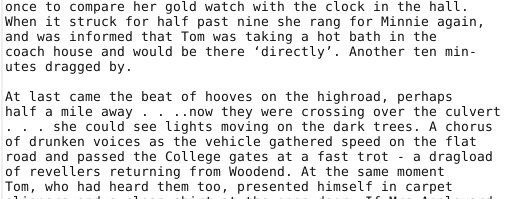

Seems to work well. Here is a comparison of image and text:

I need to create a table of textfile name, page number, words per page etc. to coordinate assembly of the final text and assist with future text mining. Here are the contents of the all.files variable:

    > all.files <- list.files(paste(getwd(), "/textfiles", sep=""))

Make a data.frame extracting relevant information from the filenames:

    all.files <- list.files(paste(getwd(), "/textfiles", sep=""))
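Given the “chptNN[e|o]-NNNN” naming convention, the pieces can be pulled out with a single pattern. A sketch with made-up file names; the ".txt" extension and the column names `file`, `chpt`, `eo` and `img` are assumptions for the example.

```r
# Hypothetical file names following the chptNN[e|o]-NNNN convention.
all.files <- c("chpt01e-0001.txt", "chpt01o-0001.txt", "chpt12o-0003.txt")

# Capture groups: chapter number, even/odd marker, image number.
pattern <- "^chpt([0-9]{2})([eo])-([0-9]{4})\\.txt$"

d <- data.frame(
  file = all.files,
  chpt = as.integer(sub(pattern, "\\1", all.files)),
  eo   = sub(pattern, "\\2", all.files),
  img  = as.integer(sub(pattern, "\\3", all.files)),
  stringsAsFactors = FALSE
)
```

A name that does not match the pattern passes through `sub` unchanged and becomes `NA` with a warning in the integer columns, which makes stray files easy to spot.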

Read in all the pages of text using the read_lines function from the readr package:

    library(readr)

If I look at some random pages, I can see that the second-to-last line usually holds the page number, when one exists on the page:

    > pages[[10]]

Many of the page numbers are corrupt, i.e., the OCR has thrown in random characters by mistake. I make note of these characters and use gsub to get rid of them. Some escape my efforts, but enough are accurate that I can compare the extracted page number to the expected page number, determined by the order in which the pages were fed into the scanner.

I will extract the second to the last line (stll) and include it in my table:

    pnumber <- list()[1:190]
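The extraction and cleanup can be sketched as below. Stripping every non-digit is a blunter rule than removing a noted list of stray characters, so treat it as an assumption; the helper names and sample pages are made up.

```r
# Return the second-to-last line of a page, or NA if the page is too short.
second_to_last <- function(lines) {
  n <- length(lines)
  if (n < 2) NA_character_ else lines[n - 1]
}

# Keep only the digits and convert; lines without digits become NA.
clean_page_number <- function(x) {
  suppressWarnings(as.integer(gsub("[^0-9]", "", x)))
}

# Placeholder pages: the first carries a corrupted page number, the second has none.
pages <- list(c("some text", "more text", "~ 42 .", ""), c("only line"))
stll <- vapply(pages, second_to_last, character(1))
pnumber <- clean_page_number(stll)
```

A second-to-last line that is ordinary text containing digits will yield a spurious number, which is one reason to compare against the expected page number afterwards.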

For the expected page number, create a column “chpteo” which is the concatenation of the chapter number and e or o for even or odd. Within each of these groups, number the pages sequentially in steps of 2.

    d$chpteo <- paste0(d$chpt, d$eo)
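Numbering by 2 within each group needs a starting page per group. In the sketch below that starting page comes from a hypothetical lookup vector `first_page`; the values and the four-row table are invented, and the rows are assumed to be in scanning order within each group.

```r
# Placeholder table: two odd and two even pages of chapter 1, in scanning order.
d <- data.frame(chpt = c(1, 1, 1, 1), eo = c("o", "o", "e", "e"),
                stringsAsFactors = FALSE)
d$chpteo <- paste0(d$chpt, d$eo)

# Hypothetical first page number of each chapter/parity group.
first_page <- c("1o" = 7, "1e" = 8)

# Position of each row within its group: 1, 2, ...
d$seq <- ave(seq_along(d$chpteo), d$chpteo, FUN = seq_along)

# Expected page: the group's first page plus 2 for every further sheet.
d$page <- unname(first_page[d$chpteo]) + 2 * (d$seq - 1)
```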

Here is what my data.frame “d2” looks like:

    > head(d2)

“page” is the expected page number based on scanning order.

“pnumber” is the OCR extracted page. Compare them:

    > d2[,c("page","pnumber")]
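Beyond eyeballing the two columns, the comparison can be summarized in a couple of lines. The values below are placeholders, not the real table.

```r
# Placeholder comparison table: expected page vs. OCR-extracted page.
d2 <- data.frame(page = c(7, 8, 9, 10), pnumber = c(7, NA, 9, 70))

# TRUE where they agree, FALSE where they differ, NA where OCR found no number.
d2$match <- d2$page == d2$pnumber

# Share of agreeing pages among those with an extracted number.
agreement <- mean(d2$match, na.rm = TRUE)

# Rows worth checking against the original image.
suspect <- d2[which(!d2$match), ]
```

Using `which()` drops the `NA` rows, so pages without an extracted number are not reported as mismatches.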

Looks good. There are some OCR errors but enough come through to verify that the order is correct. Now I can sort on page and use that order to assemble the ebook. Read each page file and append to an output file. Since I want to be able to refer to images to correct problems, I also insert the image information between text files:

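The assembly step can be sketched as a loop that writes a marker line naming the source file before each page's text. The marker format, the helper name `assemble_book`, and the demo files are assumptions; only the idea of sorting on `page` and interleaving file information comes from the description above.

```r
# Append every page, in page order, to one output file with a file-name marker.
assemble_book <- function(d, text_dir, out_file) {
  d <- d[order(d$page), ]
  con <- file(out_file, open = "w")
  on.exit(close(con))
  for (i in seq_len(nrow(d))) {
    writeLines(paste0("[[ ", d$file[i], " ]]"), con)  # hypothetical marker format
    writeLines(readLines(file.path(text_dir, d$file[i]), warn = FALSE), con)
  }
  invisible(out_file)
}

# Small demonstration with two placeholder pages listed out of order.
text_dir <- file.path(tempdir(), "textfiles_sketch")
dir.create(text_dir, showWarnings = FALSE)
writeLines("second page", file.path(text_dir, "b.txt"))
writeLines("first page", file.path(text_dir, "a.txt"))
d <- data.frame(file = c("b.txt", "a.txt"), page = c(2, 1), stringsAsFactors = FALSE)
out_file <- file.path(tempdir(), "book_sketch.txt")
assemble_book(d, text_dir, out_file)
```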

Here is what a page junction looks like:

You can see the page number when present, which will provide a method to confirm the correct order. The file name is included, which will allow me to go back to the original image during the proofreading process to verify words I may be uncertain of.

It would be nice to have the image and text juxtaposed during the proofreading process. To see what this looks like, take a look at Project Gutenberg’s Distributed Proofreaders website. I will have to read on a device that allows me to refer to the images when needed. Once the proofreading is complete, I will be ready for sentiment analysis.

The next post in this series discusses text manipulation.

Associate GPS coordinates with a street address

    ;;;; Guile/Lisp Setup

From https://ambrevar.xyz/guix-advance/

Install guile 2.2.7; can’t find libffi

https://guile-user.gnu.narkive.com/qcZSj1pL/libffi-not-found-even-if-installed-in-default-path

$ find /usr -name 'libffi.*'

/usr/lib/libffi.dylib

/usr/local/lib/libffi.5.dylib

/usr/local/lib/libffi.a

/usr/local/lib/libffi.dylib

/usr/local/lib/libffi.la

/usr/local/lib/pkgconfig/libffi.pc

/usr/local/share/info/libffi.info

However, it did not help, and the ./configure still fails with the very same error message.

Did you try running configure as something like

PKG_CONFIG_PATH=/usr/local/lib/pkgconfig ./configure

My command:

PKG_CONFIG_PATH=/usr/lib/x86_64-linux-gnu/pkgconfig ./configure

In .bash_profile (not .bashrc)

(see https://guix.gnu.org/manual/en/html_node/Invoking-guix-environment.html#Invoking-guix-environment)

GUIX_PROFILE="$HOME/.guix-profile" ;

source "$HOME/.guix-profile/etc/profile"

Missing development packages error

sudo find / -name "guile*.pc"

Look at the directories. Are they present in the output of

echo $PKG_CONFIG_PATH

Say you find one in /usr/local/lib/pkgconfig

export PKG_CONFIG_PATH=/usr/local/lib/pkgconfig in .bashrc

sudo find / -name "guile*.pc"

Is it in the path:

scheme@(guile-user)> (search-path %load-path "dbi/dbi.scm")

# find / -wholename '*/dbi/dbi.scm'

Note that I find /usr/local/share/guile/site/2.2/dbi/dbi.scm, so to run (use-modules (dbi dbi)) I must add the following to .bashrc:

export GUILE_LOAD_PATH="/usr/local/share/guile/site/2.2:/home/mbc/.guix-profile/share/guile/site/3.0${GUILE_LOAD_PATH:+:}$GUILE_LOAD_PATH"